The Football Fortune Tellers

It was of course a public relations gimmick by Goldman Sachs and PricewaterhouseCoopers to ‘predict’ the outcome of the 2014 FIFA World Cup. Their best and brightest peered into their crystal footballs, fed them historical data, and created statistical models meant to foresee what has recently transpired.

Let’s take a look at what they claimed before the World Cup and what really happened in Brazil in order to answer the question: Were they right?

Goldman Sachs

Unless you have been hiding in a cave with a reindeer hide draped over your eyes and two chicken legs wedged in your ears for the last few years, you have probably heard about Goldman Sachs and their involvement in the global financial crisis. Naturally, they wanted to boost their public image and turned to football to show off their fortune-telling skills.

Goldman Sachs’s World Cup and Economics 2014 report is built on a stochastic model that uses data from international football matches since 1960. The analysts built a regression model that predicted the number of goals scored, which they assumed to follow a Poisson distribution¹, based on a number of factors:

- differences in Elo rankings between teams;

- average number of goals scored in the last ten international matches;

- average number of goals scored against in the last five international matches;

- whether the game took place at a World Cup;

- whether the team played in their home country;

- whether the team played on their home continent.

Why they considered the last ten games for goals scored but only the last five for goals conceded is not explained.

Their regression model was fed to a Monte Carlo simulator and run 100,000 times for each World Cup match. What’s striking is their prediction that 33 out of 48 group matches would end in draws (all 1–1): that’s 68.75% of all group matches! Goldman Sachs consequently predicted that no match would end in a goalless draw.
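To make the simulation step concrete, here is a minimal sketch of a Monte Carlo run for a single match. It assumes each side's goals are independent Poisson draws; the expected-goal values of 1.5 and 1.3 are invented for illustration, and nothing here is Goldman Sachs's actual code or coefficients.

```python
import math
import random
from collections import Counter


def sample_poisson(rng, mean):
    """Draw one Poisson-distributed goal count (Knuth's multiplication method)."""
    threshold = math.exp(-mean)
    goals = 0
    product = 1.0
    while True:
        product *= rng.random()
        if product <= threshold:
            return goals
        goals += 1


def simulate_match(mean_home, mean_away, runs=100_000, seed=2014):
    """Simulate one fixture `runs` times and count how often each scoreline occurs."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    scorelines = Counter()
    for _ in range(runs):
        home = sample_poisson(rng, mean_home)
        away = sample_poisson(rng, mean_away)
        scorelines[(home, away)] += 1
    return scorelines


# Hypothetical expected goals for the two teams (assumed values, not from the report).
scorelines = simulate_match(1.5, 1.3)
most_common_score, _ = scorelines.most_common(1)[0]
draw_share = sum(n for (h, a), n in scorelines.items() if h == a) / sum(scorelines.values())

print("most frequent scoreline:", most_common_score)
print("share of simulated draws:", round(draw_share, 3))
```

With these made-up inputs, 1–1 comes out as the single most frequent scoreline even though only about a quarter of the simulated matches are draws. If the report listed the most likely score for each match, that could explain the flood of 1–1 predictions, but that is my guess and not something the report states.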

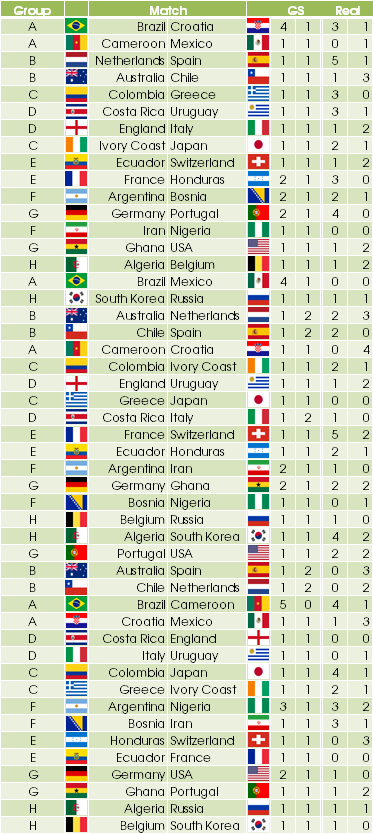

The results of the group matches at the 2014 FIFA World Cup, including the predictions by Goldman Sachs, are shown in the accompanying table.

They also found that home advantage is a key predictor in international football matches, adding an extra 0.4 goals scored per match. Furthermore, the home continent advantage for Latin American teams is statistically significant.

Their top 4 for the World Cup were 1) Brazil, 2) Argentina, 3) Germany, and 4) Spain. Ladbrokes’ odds favoured Brazil as the winner too but only with a 25% chance of victory, whereas Goldman Sachs listed almost 50%. A similar figure was predicted by Wolfram Research.

The economists at Goldman Sachs did note that “the predictions […] are very uncertain because football is a low-scoring and unpredictable game.” Way to make a prediction and immediately back down!

PwC

PwC created their World Cup Index based on obvious metrics:

- current form as indicated by current FIFA rankings (35%),

- home (continent) advantage (30%),

as well as somewhat less obvious ones:

- football tradition, based on whether a country bid to host a World Cup at least three times and whether the country is European or South American (15%),

- the number of registered football players (10%),

- football interest as indicated by club attendance (10%).

The weights of each variable are shown in parentheses.
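As a rough illustration of how such a weighted index works, the sketch below combines the five weights listed above into a single score. The assumption that each metric is first scaled to a value between 0 and 1, and the example scores for an imaginary team, are mine; PwC's actual scaling is not described here.

```python
# Weights as given in the list above, expressed as fractions of 1.
WEIGHTS = {
    "current_form": 0.35,
    "home_advantage": 0.30,
    "football_tradition": 0.15,
    "registered_players": 0.10,
    "football_interest": 0.10,
}


def world_cup_index(scores):
    """Weighted sum of metric scores, each assumed to lie between 0 and 1."""
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical scores for an imaginary team (not real data).
example_team = {
    "current_form": 0.9,
    "home_advantage": 1.0,
    "football_tradition": 0.8,
    "registered_players": 0.6,
    "football_interest": 0.5,
}

index = world_cup_index(example_team)
print(round(index, 3))
```

Because form and home advantage together carry 65% of the weight, a host with a decent FIFA ranking ends up near the top almost regardless of the other three metrics.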

They ‘discovered’ that a host country progresses on average two more rounds thanks to the home advantage. Moreover, the GDP per capita does not significantly impact a country’s performance. One of my favourite lines, though, is that “having a large population does little to enhance footballing performance if it does not produce a larger pool of players from which to choose”. Why, I never! Another gem is that “performance in the last two World Cups was significant in explaining current performance”. Who would have thought that recent performance impacts current performance?!

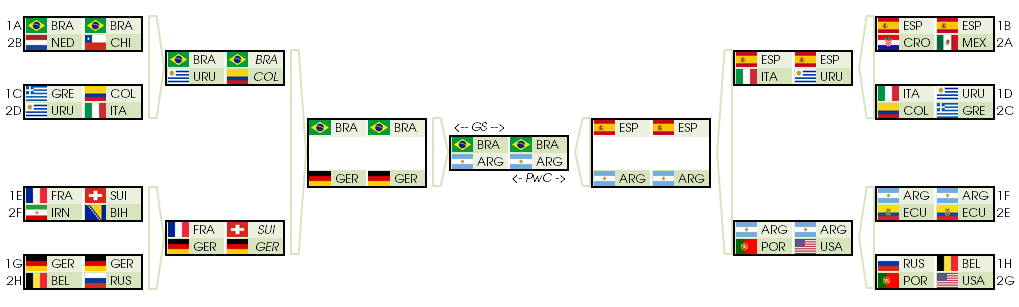

PwC’s top 4 was identical to Goldman Sachs’s. Both ranked Germany above Argentina, but based on the results of the group matches, Germany was most likely to play Brazil in the semi-finals, as shown in the accompanying figure.

However, they too withdrew their bold claims on the last pages by saying that “on its own [our top-down model] cannot provide robust predictions of the likely outcomes in Brazil”.

Reality

As we now know, things did not quite go as predicted. Let’s break it down by the different stages.

Group Stage

Goldman Sachs predicted the correct winner in 11 out of 48 matches, which accounts for 22.92% of all group matches. In only 3 games (6.25%) did they anticipate the final score, and they had computed the correct number of goals scored by both teams for just 6 matches (12.50%).
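For readers who want to score a set of predictions the same way, here is a small sketch of the three hit rates used above. The four matches are made up, and reading “the correct number of goals scored by both teams” as the combined goal total is my interpretation.

```python
def outcome(home, away):
    """Return 1 for a home win, 0 for a draw, -1 for an away win."""
    return (home > away) - (home < away)


def hit_rates(predicted, actual):
    """Share of matches with the right outcome, exact score, and goal total."""
    pairs = list(zip(predicted, actual))
    n = len(pairs)
    return {
        "outcome": sum(outcome(*p) == outcome(*a) for p, a in pairs) / n,
        "exact_score": sum(p == a for p, a in pairs) / n,
        "total_goals": sum(sum(p) == sum(a) for p, a in pairs) / n,
    }


# Hypothetical (home, away) scores, not the real predictions or results.
predicted = [(1, 1), (1, 1), (2, 1), (1, 1)]
actual = [(3, 1), (0, 0), (2, 1), (1, 1)]

rates = hit_rates(predicted, actual)
print(rates)
```

Note that a predicted 1–1 still counts as a correct outcome when the match ends 0–0, which is why the outcome rate can exceed the exact-score rate.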

The statistical model by Goldman Sachs did fare much better than a completely random assignment of wins, losses, and draws. The probability of randomly choosing the correct overall result for all 48 games is 1 in 3^48, which for all practical purposes is zero.
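The odds quoted here are three possible results (win, draw, loss) raised to the power of 48 matches. A quick check, assuming every result is equally likely and the matches are independent:

```python
from fractions import Fraction

MATCHES = 48
RESULTS_PER_MATCH = 3  # win, draw, or loss, assumed equally likely

combinations = RESULTS_PER_MATCH ** MATCHES
p_all_correct = Fraction(1, combinations)

print(f"possible result sheets: {combinations:.3e}")
print(f"chance of guessing all 48: {float(p_all_correct):.3e}")
```

That is a number with 23 digits, so nobody should lose sleep over a monkey with a dartboard filling in a perfect group stage.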

There have always been goalless draws in group matches in the most recent championships. At the 2010 FIFA World Cup in South Africa I counted six group matches that ended in 0–0. Similarly, in 2006 in Germany there were five such group matches, and in the 2002 World Cup hosted jointly by South Korea and Japan two matches ended without cheers from the fans of either team that played. In Brazil the scoreboard showed 0–0 five times as the teams left the pitch. Goldman Sachs completely missed those matches.

What is more, the number of group matches that ended in a draw was 11 in 2006 and 12 in both 2010 and 2002. The 2014 tournament was no exception with 9 draws, of which two ended 1–1 and another two 2–2.

According to the BBC the 2014 World Cup seemed to have had the “statistically greatest” group stage at any World Cup. They based their conclusion on the mean number of goals per match and the percentage of games ending in a win. Whether these point estimates were significantly outside an acceptable confidence range was not mentioned, and I very much doubt it. An epithet as grand as “statistically greatest” therefore seems hardly justified, methinks. But I do understand that it may have caused a click or two.

Knockout Stage

To everyone’s surprise, Spain did not make it through the group stage. I doubt that anyone could have guessed that, no matter how fancy their models. Spain, the reigning world champion, had won its last three friendly matches before the championship, and this year’s Champions League final was between two Spanish teams: Real Madrid vs Atlético Madrid. Italy’s and Portugal’s early exits came as a surprise to most, including of course Goldman Sachs and PwC.

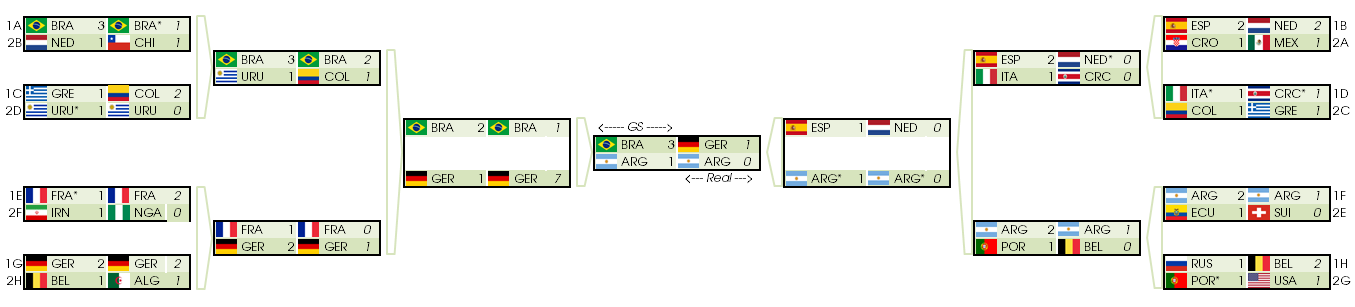

The figure above is the comparison of Goldman Sachs’s predictions (left in each box) and how it really happened (right in each box) for the knockout stage. If there is an asterisk (*) next to a country abbreviation, it means that the game ended in a draw after extra time but the country marked with an asterisk moved on to the next round after penalty kicks.

As you can see, Goldman Sachs correctly predicted 9 of the last 16 contestants (56.25%), whereas PwC did slightly better with 11 (68.75%). Goldman Sachs’s performance is not stellar: you could have got 7 out of 16 right simply by looking at countries that had reached the semi-finals at World Cups before 2014, an approach that obviously ignores both the home advantage of South American teams and more recent performance.

For the quarter-finals, Goldman Sachs correctly listed 4 out of 8 teams, as did PwC.

For the semi-finals, both Goldman Sachs and PwC managed to get the same 3 out of 4 right (75%): Argentina, Brazil, and Germany. The Netherlands was the odd one out.

When it comes to the final, Goldman Sachs and PwC foresaw Argentina as one of the two finalists but not Germany.

Summary

Goldman Sachs and PwC were more clairvoyant than a random selection of winners and losers. Their statistical models were, however, not much better than simply looking at countries that had reached the semi-finals at World Cups before the 2014 tournament in Brazil. Goldman Sachs’s match results were generally way off, and the number of draws was significantly lower than what the sciolists had seen in their crystal balls. In fact, the number of draws was in line with the three most recent World Cups, which indicates a systematic flaw in the GS model.

Most importantly, PwC’s and GS’s models failed to predict the correct winner. In fact, their favourite, Brazil, ended in fourth place. Before the championship, Goldman Sachs computed Brazil’s probability of taking home the prize to be almost 0.5. After the group games had been played, they updated their portal with ‘improved’ predictions. The latest model listed Brazil and the Netherlands as the two finalists, even though they had originally considered Argentina a finalist (correct) and Germany a semi-finalist with better odds than Argentina to win the World Cup (also correct). Again, they had calculated Brazil to be the winner. Goldman Sachs refused to comment on their newest prognostications. As we now know, with good reason.

In their defence, they were of course not as far off as their colleagues at ING who, apart from misspelling Colombia, predicted that Spain would remain the world champion based on the total market value of their squad. It really seems that economists know the price of everything but the value of nothing. By the way, there goes the efficient-market hypothesis…

What is more, economists rarely go out on a limb to make predictions contrary to popular belief and thus risk looking like fools when the chips are down. When they’re wrong, and they sometimes are, they can relax, take a sip from their caipirinhas, and dismiss any accusations of wrongdoing by saying that everyone else thought the same thing too.

Interestingly, Microsoft’s Cortana was spot on for all knockout games.² Cortana correctly named the semi-finalists ahead of the quarter-finals. The somewhat surprising final of Germany vs Argentina was also foretold by Cortana, although it could not imagine the magnitude of Brazil’s epic 7–1 thrashing by Germany, after which the Netherlands added insult to injury by beating Brazil 3–0 in the match for third place. Cortana’s favourite for the final was Germany, who beat Argentina 1–0 in extra time. Well done, Cortana!³

Cortana admittedly adjusted its predictions based on recent match statistics at the World Cup, as it had favoured Brazil as the winner before the start of the World Cup, so it is not entirely fair to compare Cortana’s short-term predictions with the work of the numerologists at Goldman Sachs and PwC. It also goes to show that not all data needed to ‘compute’ the winner of the World Cup was available at the beginning of the tournament. Nevertheless, the good people at Goldman Sachs did brag about their model’s retrodictive performance, which they had obviously mistaken for an indicator of its predictive qualities, so I do believe I am right to point out their failures.

All in all, I think Goldman Sachs and PwC have demonstrated that it’s generally easier to predict the past than it is to predict the future. Microsoft’s machine learning approach was clearly superior to the relatively simple statistical models employed by the economists. The power of Microsoft’s approach obviously came from tweaking predictions based on recent information, such as match statistics and weather conditions.

The performance of Goldman Sachs’s astrologers did not go unnoticed though. Ben Geier at Fortune wrote that Goldman Sachs was only “so-so at World Cup predictions”, and Adam Button of ForexLive took it one step further to say that they were “as bad at predicting the world cup as [they are] at predicting markets.” Ouch!

1. The idea that scoring goals is the combination of three independent Poisson processes (i.e. random effects, team fitness, and match-specific effects such as temperature, injuries, red cards) comes from a paper by a trio of scientists who looked at 20 years of statistics for the German Bundesliga.

2. Besides historical performance in international matches and the home (continent) advantage, the Bing Predictions machine learning engine that flips Cortana’s tarot cards also took weather conditions and the hybrid grass playing surface into account. For the group rounds, Cortana’s performance was not as glorious, as it achieved an accuracy of ‘only’ 60.42% (29 out of 48 matches).

3. Siri and Google also chimed in to try and nab a bit of publicity from their rival. Apple’s and El Goog’s predictions were, however, not as juicy as Cortana’s.