Roads to Quantum Advantage

When can we expect quantum computers to be able to solve real problems more efficiently than classical computers?

Quantum advantage is when quantum computers become faster than classical computers at solving relevant, real-world problems. Quantum supremacy is when quantum computers process a specific problem faster than classical computers. Google’s 2019 quantum supremacy claim has been disputed by IBM, downplayed by John Preskill, and ultimately disproven by a tensor slicing algorithm that simulates quantum computers on classical hardware more efficiently.

While Google’s achievement is a true milestone in the noisy intermediate-scale quantum (NISQ) era, quantum supremacy is tailor-made for making quantum computers look better than they generally are. That is why it is more useful to look at the more general quantum advantage, that is, when quantum computers become generally useful and advantageous to solve complex problems. This is sometimes referred to as broad quantum advantage as opposed to a more narrow quantum advantage for individual use cases.

The point at which that happens is thought to be around 70–75 logical (or algorithmic) qubits. At 70 logical qubits, a quantum algorithm works on a state space of 2^70 complex amplitudes, which is roughly 1.2 sextillion (1.2 × 10^21). The number of physical qubits required depends on the error correction scheme used. In some cases, the physical-to-logical ratio can be as high as 1,000:1, although for trapped ions lower figures (e.g. 13:1) have been reported on current QPUs. Still, to achieve quantum advantage we need around 10,000 physical qubits.
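The arithmetic behind these figures is easy to check. The following is a minimal sketch, not taken from any vendor's tooling: the function names are made up for illustration, and the only inputs are the qubit counts and physical-to-logical ratios quoted above.

```python
def state_space_size(logical_qubits: int) -> int:
    """Number of complex amplitudes in an n-qubit state vector: 2**n."""
    return 2 ** logical_qubits


def physical_qubits_needed(logical_qubits: int, physical_per_logical: int) -> int:
    """Physical qubits required for a given error-correction overhead."""
    return logical_qubits * physical_per_logical


if __name__ == "__main__":
    # Qubit counts and ratios are the ones quoted in the text.
    for n in (70, 75):
        print(f"{n} logical qubits -> {state_space_size(n):.3e} amplitudes")
        for ratio in (13, 1000):
            total = physical_qubits_needed(n, ratio)
            print(f"  at {ratio}:1 -> {total:,} physical qubits")
```

The spread between the two ratios shows why the choice of error correction scheme, and hence of hardware technology, dominates any estimate of the physical qubits required.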

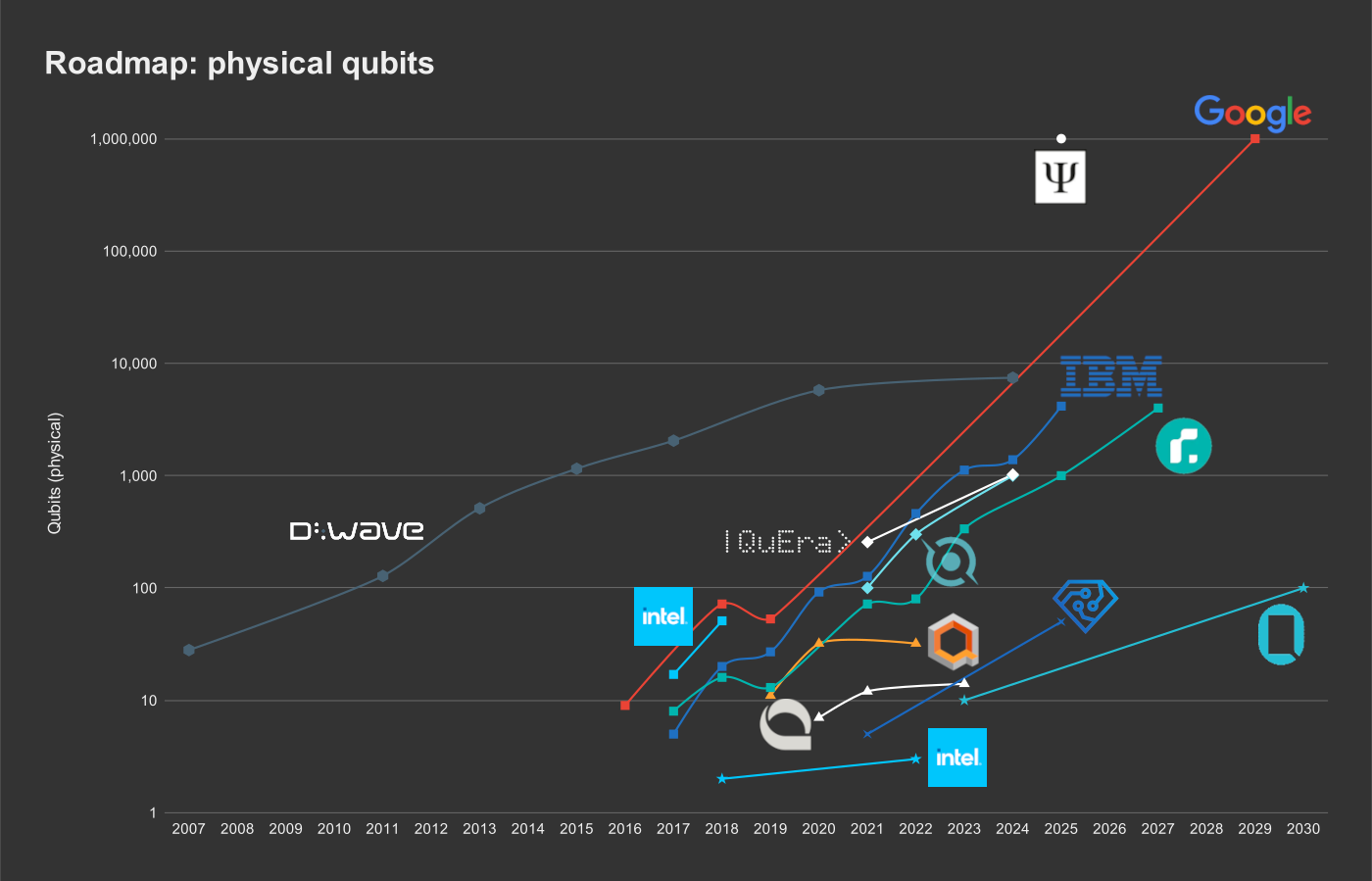

In what follows, we shall compare figures from existing and planned quantum computers for physical and logical qubits. The different technologies are indicated as follows:

- Superconductors: square (■)

- Trapped ions: triangle (▲)

- Photonics: circle (●)

- Spin qubits in diamond (NV centres): cross (×)

- Spin qubits in semiconductors (quantum dots): star (★)

- Neutral atoms: diamond (◆)

- Annealers: hexagon (⬢)

More details and references are available in Quantum Computing Roadmaps, where I present the data in tabular form.

Physical qubits

Both Google and PsiQuantum are outliers in predicting 1 million physical qubits by the end of the decade. What is more, PsiQuantum has yet to prove its design at any scale, and Google’s extrapolation is iffy at best, as its current generation of chips has merely 53 (Sycamore) and 72 (Bristlecone) functioning qubits.

From the chart, it is clear that the majority of manufacturers that publish their quantum computer roadmaps expect to reach quantum advantage by 2030–2035.

We obtain roughly the same result when we apply Rose’s Law, which is the equivalent of Moore’s Law for quantum computers, to the entire industry and set the current level at 50 qubits. With that, we are about 8 years from 10,000 qubits, which puts us at 2030 or thereabouts.
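The extrapolation can be reproduced in a few lines. This is a rough sketch under stated assumptions: a doubling period of one year (chosen because it reproduces the 8-year figure above) and a starting year of 2022 (inferred from "8 years" landing on 2030). The function name is hypothetical.

```python
import math


def years_to_reach(current_qubits: float, target_qubits: float,
                   doubling_period_years: float = 1.0) -> float:
    """Years needed to grow from current to target under steady doubling.

    The default doubling period of one year is an assumption.
    """
    doublings = math.log2(target_qubits / current_qubits)
    return doublings * doubling_period_years


if __name__ == "__main__":
    start_year = 2022  # assumption, inferred from the text
    years = years_to_reach(50, 10_000)
    print(f"{years:.1f} years, i.e. around {start_year + math.ceil(years)}")
```

A slower doubling period shifts the date out proportionally, so the result is only as good as the assumed growth rate.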

Both ColdQuanta and Pasqal (not shown) have roughly the same near-term roadmap: 1,000 qubits based on neutral atoms by 2024, although very little is known about the performance, quality, and general commercial availability of their current 300-qubit systems.

Note that quantum annealers are really a separate category, because D-Wave’s approach is to have lots of qubits to compensate for their faster decoherence, which is often several orders of magnitude worse than that of competitors. These machines are designed for specific optimization problems.

While IonQ appears to flatline, their progress is best measured in logical qubits, for which they publish their roadmap.

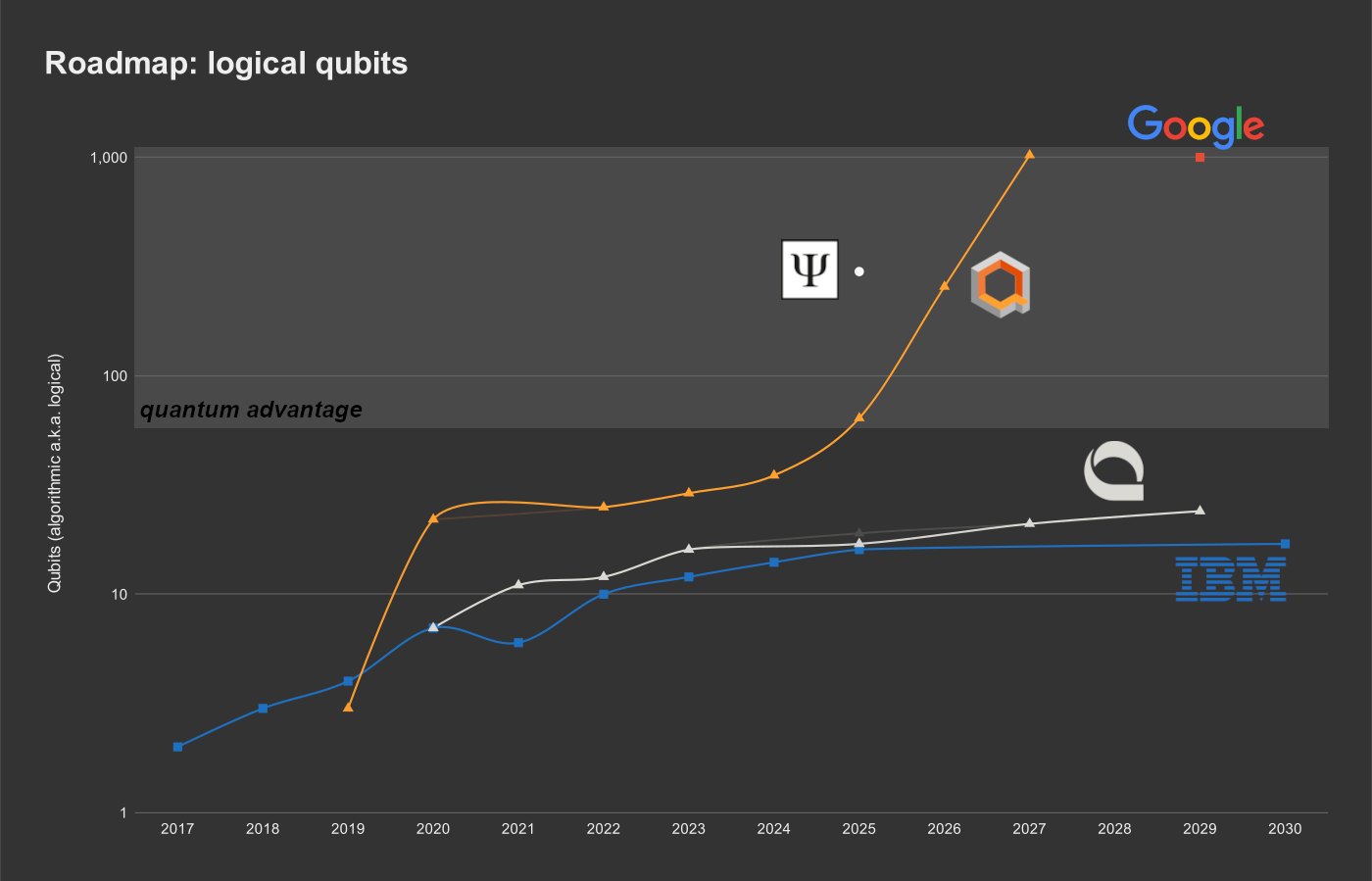

Logical qubits

Logical, or algorithmic, qubits are in many instances not published. However, the algorithmic qubits can be inferred from the quantum volume, which is occasionally listed. The number of algorithmic qubits is the base-2 logarithm of the quantum volume in the absence of error-correction encoding.
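As a small illustration of that conversion, the sketch below takes a published quantum volume and returns the implied number of algorithmic qubits. The function name and the sample values are illustrative only and do not refer to any particular machine.

```python
import math


def algorithmic_qubits(quantum_volume: int) -> float:
    """Algorithmic qubits implied by a quantum volume: log2(QV).

    Only meaningful without error-correction encoding.
    """
    if quantum_volume < 1:
        raise ValueError("quantum volume must be at least 1")
    return math.log2(quantum_volume)


if __name__ == "__main__":
    # Illustrative quantum volumes, not measurements of real hardware.
    for qv in (64, 4096, 2 ** 20):
        print(f"QV {qv:>9,} -> {algorithmic_qubits(qv):.0f} algorithmic qubits")
```

Because the relation is logarithmic, each additional algorithmic qubit requires the quantum volume to double.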

The quantum volume is a metric of interest in the NISQ era, in which the difference between physical and logical qubits can be quite large due to qubit connectivity and gate fidelity, or rather the lack thereof. It is measured on square circuits, in which the number of qubits equals the number of layers (circuit depth): if the largest such circuit a machine can run reliably has n qubits and n layers, its quantum volume is 2^n.

Again, PsiQuantum expects to reach quantum advantage way earlier than anyone else. IonQ expects significant improvements to reach quantum advantage in a few years, whereas both IBM and Quantinuum expect that to happen after 2030, and probably closer to 2040. Note that IBM are exemplary in the publishing of characteristics for each generation of their quantum hardware. This adds more weight to their estimates.

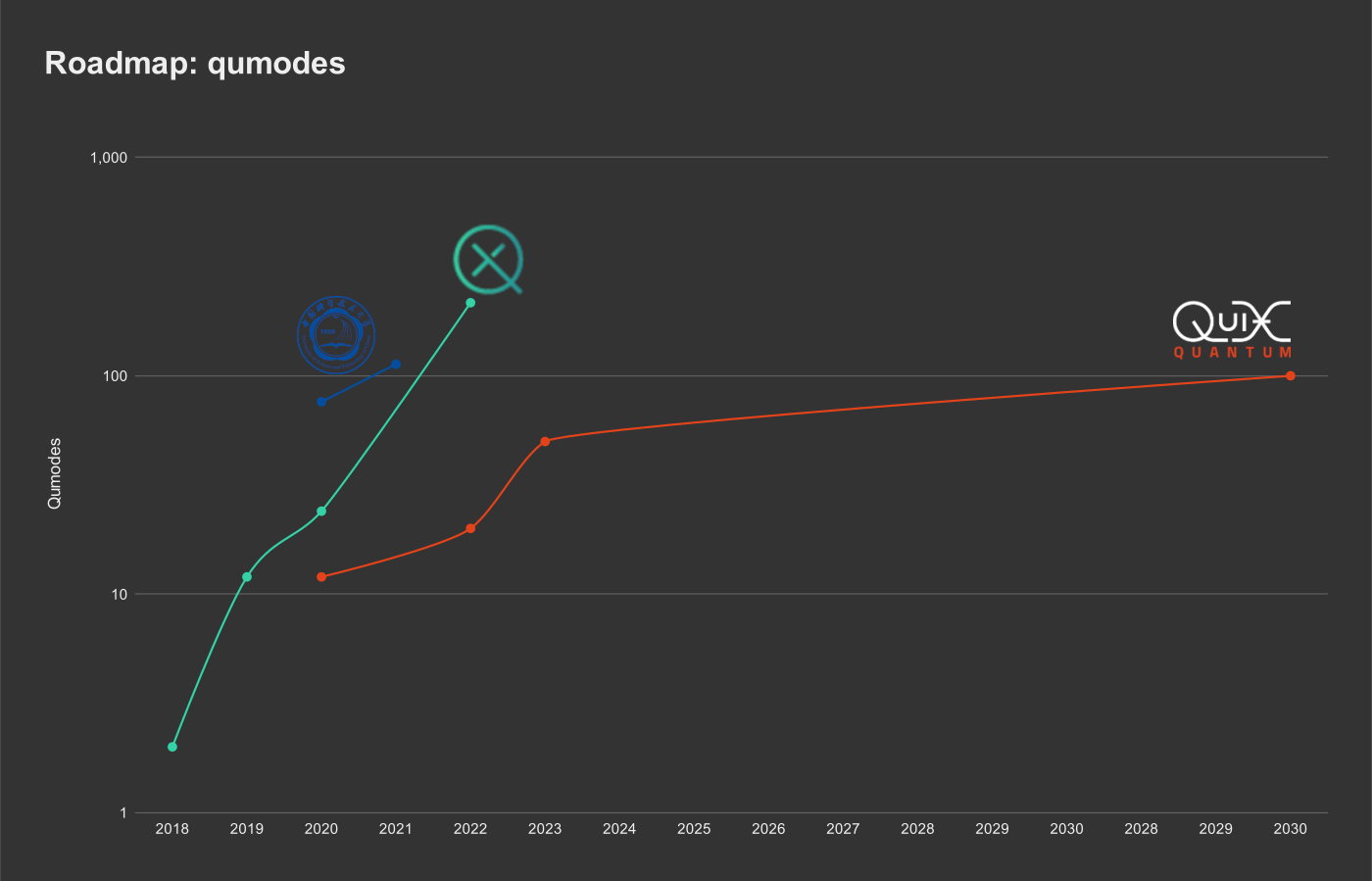

Qumodes

Photonic quantum computers have a few obvious benefits. They operate at room temperature, except perhaps for the photon detectors, which can easily be separated from the chip and connected to it with an optical fibre. And because photons rarely interact with each other or their surroundings, they are not as delicate when it comes to decoherence.

In photonic quantum computers that rely on beams of light, qumodes replace qubits. Each qumode is a single quantized beam of (squeezed) light. Whereas each qubit has only two (discrete) basis states (|0⟩ and |1⟩), a single qumode has a continuous spectrum of states, because it can contain an arbitrary number of photons, although in practice qumodes are made of a finite number of photons.

Why is PsiQuantum listed under the physical qubits and not in the qumodes chart?

Unlike QuiX Quantum and Xanadu, PsiQuantum and their industrial partner GlobalFoundries rely on individual photons rather than beams.

Individual photons make for a discrete approach, like qubits, while beams lead to (continuous-variable) qumodes.

Conclusion

Quantum advantage for a large variety of use cases is expected between 2030 and 2040, possibly before 2035. More optimistic, if doubtful, estimates see quantum advantage happen before the end of this decade.

While physical qubits, logical qubits, and quantum volume all gloss over the realities of noisy, imperfect hardware, such as coherence times, qubit connectivity (nearest neighbour vs full), one- and two-qubit gate fidelities, circuit depth (layers), and so on, various benchmarks exist to offer better comparisons among architectures and technologies. Still, benchmarks are not without their issues either, and it is rare to encounter manufacturers who publish multiple, realistic results from such benchmarks.

It is clear that NISQ-era machines will already achieve quantum advantage, ahead of the advent of universal fault-tolerant quantum computers. It is therefore time to get ready for the revolution and learn quantum computing today.